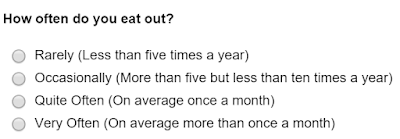

It is quite common to come across surveys that ask questions similar to the one below.

That neither the survey publisher nor, for that matter, the respondent would find anything particularly wrong with such a question is perhaps the nub of the problem.

The problem is that the answer options (Rarely, Occasionally, Quite Often and Very Often) are all subjective, so what the survey publisher considers to be Occasionally the respondent may consider to be Quite Often or even Very Often.

It is okay to use subjective answer options, provided they are quantified so that everyone is using the same definition, for example:

In this way, it doesn't matter if the respondent has a different definition of what Occasionally means, as it is clearly defined in the question.

However, it is also important that the respondent can answer accurately. If they wanted to respond that they eat out five times a year, or on average eleven times a year, they would not be able to do so, as no answer option caters for those frequencies.

It is also important to note that in the above example Quite Often is quantified, but unlike the other answer options, which cover much wider groups, it is a very precise answer.
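For anyone who builds surveys programmatically, both problems can be checked before the survey goes out. The sketch below is only an illustration: the answer ranges are hypothetical values chosen to mirror the issues described above (no option for five or eleven times a year, and a Quite Often option that covers a single value), and the names `OPTIONS`, `find_gaps` and `widths` are made up for this example.

```python
# Hypothetical answer options as (label, lowest, highest) in times per year.
# These ranges are assumptions for illustration, not taken from a real survey.
# None as the upper bound means "or more".
OPTIONS = [
    ("Rarely", 0, 4),
    ("Occasionally", 6, 10),
    ("Quite Often", 12, 12),
    ("Very Often", 13, None),
]


def find_gaps(options):
    """Return the whole-number frequencies that no answer option covers."""
    gaps = []
    ordered = sorted(options, key=lambda option: option[1])
    # Compare the top of each option with the bottom of the next one.
    for (_, _, high), (_, next_low, _) in zip(ordered, ordered[1:]):
        if high is not None:
            gaps.extend(range(high + 1, next_low))
    return gaps


def widths(options):
    """Return how many frequencies each bounded option covers."""
    return {
        label: high - low + 1
        for label, low, high in options
        if high is not None
    }


GAPS = find_gaps(OPTIONS)
WIDTHS = widths(OPTIONS)

print(GAPS)    # [5, 11]
print(WIDTHS)  # {'Rarely': 5, 'Occasionally': 5, 'Quite Often': 1}
```

A non-empty list of gaps means some respondents cannot answer accurately, and a width that differs sharply from the others flags an option that is far more precise than the rest.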

It is therefore always better to ensure that respondents can answer accurately and that the groupings are relatively consistent, as in the example below: